Me too. It’s a science meme community after all.

There’s no evidence for gods though.

2111·4 days ago

God forbid people have some self expression

They do indeed forbid it.

10 "If you go to battle against your enemies, and the LORD your God delivers them into your control, you may take some prisoners captive. 11 If you see among the prisoners a beautiful woman and you desire her, then you may take her as your wife. 12 Bring her to your house, but shave her head and trim her nails

Deuteronomy 21

Oh man, religions are batshit crazy.

59·4 days ago

Probably has to be renamed to “ClosedAI” then.

Also: monoculture.

Also: monoculture of conifers, which can “poison” the surrounding area because the overload of needles falling to the ground acidifies it. This is especially problematic in the vicinity of rivers, brooks, or other water bodies, as it can lead to “toxic flushes”. Learned that from Mossy Earth: https://www.mossy.earth/projects/riparian-restoration-glassie-farm

Shit! They found me! *crawls away*

5·7 days ago

Rebranding a Markov Chain stapled onto a particularly large graph

Could you elaborate how this applies to various areas of AI in your opinion?

Several models are non-Markovian. There are also many models and algorithms for which a description as, or even a comparison to, Markov chains would be incorrect and unsuitable.

I feel this. Fell into a similar rabbit hole when I tried to get realtime feedback on the program’s own memory usage, distinguishing things like reserved and actually used virtual memory. Felt like black magic and, I suppose, was ultimately not doable within the expected time constraints without touching the kernel. Spent too much time on that and had to move on with no other solution than to measure/compute the allocated memory of the largest payload data types.

2·11 days ago

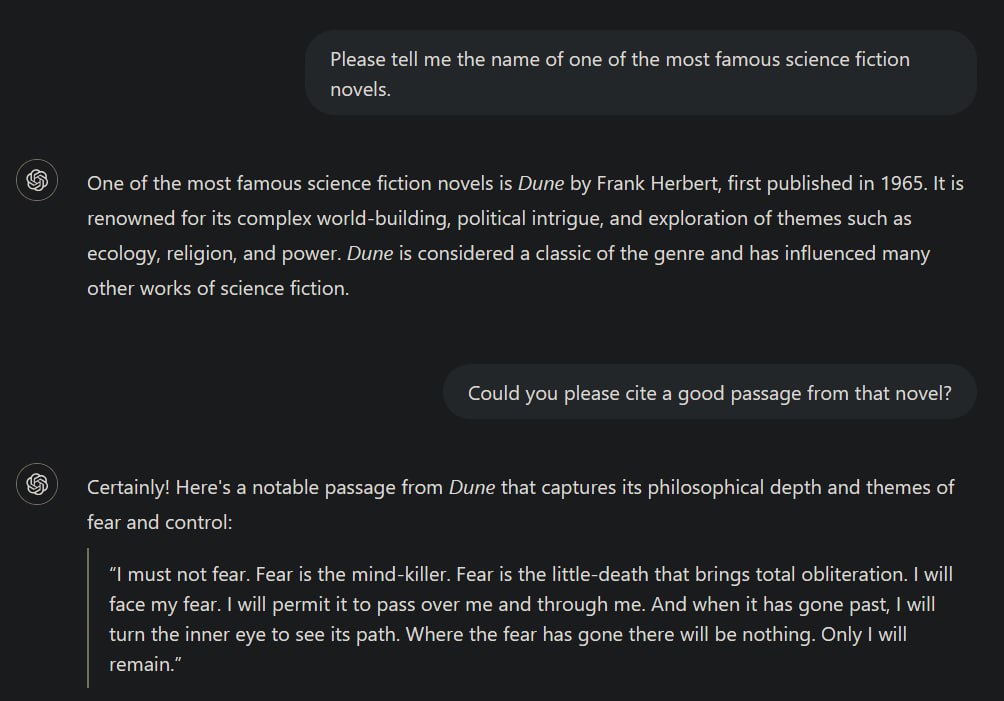

My point is that the following statement is not entirely correct:

When AI systems ingest copyrighted works, they’re extracting general patterns and concepts […] not copying specific text or images.

One obvious flaw in that sentence is the blanket statement about AI systems. There are huge differences between the different realms of AI, and failing to acknowledge them, even briefly, undermines the author’s factual correctness. For example, there is a plethora of non-generative AIs: systems that do not generate text, audio, or images/videos but merely operate as, for instance, classifiers or clustering algorithms. Without further modification, these are not intended to replicate data similar to their inputs but rather to provide insights.

However, I can overlook this, as the author might just not have thought about it at the moment of writing. Next:

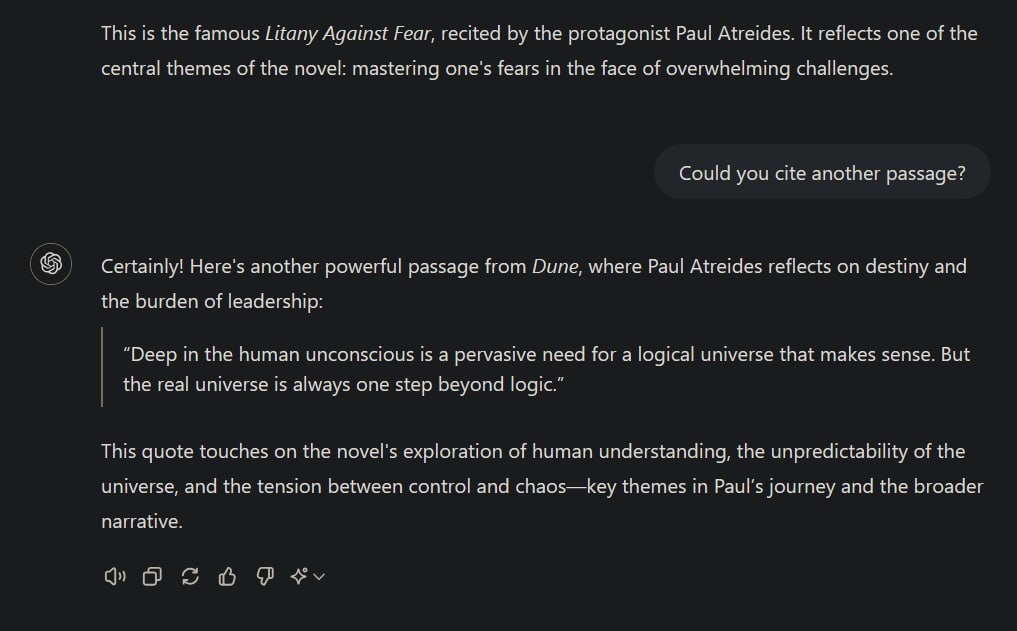

While it is true that transformer models like ChatGPT try to learn patterns, i.e. the most likely next token in a sequence of contextually coherent data, given the right context it is not unlikely that such a model reproduces its training data nearly or even completely identically, as I’ve demonstrated before. The less data is available for a specific context to generalise from, the more likely it becomes that the model just replicates its training data. This is in principle fine because this is what such models are designed to do: draw the best possible conclusions from the available data to predict the next output in a sequence. (That’s one of the reasons why they need such an insane amount of data to be trained on.)

This can ultimately lead to occurrences of indeed “copying specific texts or images”.

but the fact that you prompted the system to do it seems to kind of dilute this point a bit

It doesn’t matter whether I directly prompted it for it. I set the correct context to achieve this kind of behaviour, because context matters most for transformer models. Directly prompting it to do that was just an easy way of setting the required context. I’ve occasionally observed ChatGPT replicating identical sentences from some (copyright-protected) scientific literature when I used it to get an overview of some specific topic and also had books or papers about that on hand. The latter demonstrates again that transformers become more likely to replicate training data the more “specific” a context becomes, i.e., when significantly less training data is available for that context than for others.
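To make the data-sparsity effect concrete, here is a minimal sketch. It is deliberately not a transformer: it is a toy next-token model that only counts which word follows which, and the corpus, the function names `train_bigram` and `generate`, and the greedy decoding are all made up for illustration. The mechanism is far simpler than in ChatGPT, but it shows the same tendency: a context that appears only once in the training data leaves the model nothing to generalise from, so it hands back the training sentence verbatim.

```python
from collections import Counter, defaultdict


def train_bigram(sentences):
    """Count which token follows each token across all training sentences."""
    counts = defaultdict(Counter)
    for sentence in sentences:
        tokens = sentence.split()
        for current, following in zip(tokens, tokens[1:]):
            counts[current][following] += 1
    return counts


def generate(counts, start, max_tokens=20):
    """Greedy decoding: always append the most frequent follower."""
    tokens = [start]
    for _ in range(max_tokens):
        followers = counts.get(tokens[-1])
        if not followers:
            break  # no known continuation for this token
        tokens.append(followers.most_common(1)[0][0])
    return " ".join(tokens)


# Hypothetical toy corpus: "the ..." is a well-covered context,
# while the quokka sentence is the only data for its context.
corpus = [
    "the cat sat on the mat",
    "the dog sat on the rug",
    "the cat ran to the door",
    "quokkas inhabit rottnest island",
]

counts = train_bigram(corpus)

# Well-covered context: several continuations compete, so the
# output is a blend that matches no single training sentence.
print(generate(counts, "the"))

# Sparse context: exactly one continuation was ever seen, so the
# model reproduces its training data word for word.
print(generate(counts, "quokkas"))  # prints: quokkas inhabit rottnest island
```

Whether I ask for the quokka sentence explicitly or just happen to start with “quokkas” makes no difference to this toy model; the context alone determines the output.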

235·12 days ago

When AI systems ingest copyrighted works, they’re extracting general patterns and concepts - the “Bob Dylan-ness” or “Hemingway-ness” - not copying specific text or images.

Okay.

Fair point. Although one may say this is fine here for comic purposes.

The same argument could be made about the statement “Gods perfect creation”.

But I’d argue that the suggestion of a creationist god expands the distance to scientific contexts even more while simple speech bubbles are fine due to less ideological conflict potential.

Admittedly, I am also rather allergic to religions, which is why I am having a difficult time with that part of the meme.